Oracle's Agentic Applications — the Payables Agent, HR Advisor Agent, Supply Planning Agent, and their growing family — represent the most significant shift in Oracle Fusion's technical architecture since the move to cloud. They are also the testing challenge that legacy Oracle testing tools are least equipped to handle.

Tricentis Tosca, UFT, Worksoft, and Opkey were all built for deterministic software: given input A, system produces output B, every time, reliably. You write a test that supplies input A, checks for output B, and passes or fails accordingly. This model works well for traditional Oracle processes — invoice matching, payroll calculation, GL posting — where the expected output for a given input is precisely defined.

Oracle AI agents break this model. They are probabilistic: given the same inputs, an AI agent may produce different recommendations depending on context, historical data, and model state. And they are adaptive: an agent's behaviour in production evolves as it processes more data. Testing a system that intentionally produces variable outputs requires a different testing philosophy, one that legacy tools were not designed to support.

The Deterministic Test Failure Mode

The failure mode when legacy tools try to test Oracle AI agents is predictable. A Tricentis team testing the Oracle Payables Agent builds a test that supplies a specific invoice scenario and asserts a specific expected outcome — 'auto-approve'. When the agent routes the same invoice to a human approver instead (because its model has updated based on production data), the test fails. The QA team investigates, cannot identify a defect, and updates the test to accept either outcome. The test now provides no meaningful coverage.

This pattern — building deterministic tests for probabilistic systems, then weakening the assertions when the system behaves variably — produces test suites that pass consistently but validate nothing meaningful about the agent's compliance with business policy.
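The weakening described above can be made concrete with a minimal sketch. The `Case` record, the decision labels, and both check functions are hypothetical illustrations, not the API of any testing tool named in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Case:
    """One observed agent decision (hypothetical structure)."""
    invoice_amount: float
    po_amount: float
    decision: str  # assumed labels: "auto_approve", "route_to_approver"


def strict_check(case: Case) -> bool:
    # Original deterministic assertion: exactly one expected outcome.
    return case.decision == "auto_approve"


def weakened_check(case: Case) -> bool:
    # Assertion after the team "accepts either outcome".
    # It no longer looks at the invoice at all.
    return case.decision in {"auto_approve", "route_to_approver"}


# The agent legitimately escalates a borderline invoice.
legit_escalation = Case(15_000, 14_500, "route_to_approver")
# The agent auto-approves an invoice at more than double the PO value.
policy_breach = Case(30_000, 14_500, "auto_approve")

print(strict_check(legit_escalation))    # False: fails with no defect present
print(weakened_check(legit_escalation))  # True
print(weakened_check(policy_breach))     # True: the breach passes too
```

The weakened check passes in both cases because it has stopped relating the decision to the invoice, which is why it offers no policy coverage.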

What Oracle AI Agent Testing Actually Requires

Effective Oracle AI agent testing requires three capabilities that purpose-built AI testing platforms provide and legacy tools do not.

Outcome envelope definition. Instead of asserting a single expected output, effective AI agent testing defines an acceptable outcome envelope: the range of decisions that comply with business policy. Take the Payables Agent processing a £15,000 invoice against a £14,500 PO, a variance of about 3.4%. Auto-approval is acceptable if your tolerance is ±10%; escalation to the AP manager is acceptable; auto-rejection is not acceptable. The test validates that the agent's decision falls within the acceptable envelope, not that it produces one specific outcome.
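As an illustration only, the envelope for the invoice example above might be expressed like this. The function names and decision labels are assumptions made for the sketch and are not taken from SyntraFlow or Oracle. The rule for invoices outside tolerance (escalation only) is also an assumption, since the example above covers only the within-tolerance case.

```python
from __future__ import annotations

from dataclasses import dataclass

TOLERANCE = 0.10  # the ±10% tolerance from the example above


@dataclass(frozen=True)
class Scenario:
    invoice_amount: float
    po_amount: float


def acceptable_outcomes(s: Scenario, tolerance: float = TOLERANCE) -> frozenset[str]:
    """Return the set of policy-compliant decisions for a scenario."""
    variance = abs(s.invoice_amount - s.po_amount) / s.po_amount
    if variance <= tolerance:
        # Within tolerance: approve or escalate, but never auto-reject.
        return frozenset({"auto_approve", "escalate_to_ap_manager"})
    # Assumption: outside tolerance, only escalation is compliant.
    return frozenset({"escalate_to_ap_manager"})


def within_envelope(s: Scenario, decision: str) -> bool:
    """True when the agent's decision falls inside the envelope."""
    return decision in acceptable_outcomes(s)


example = Scenario(invoice_amount=15_000, po_amount=14_500)
for decision in ("auto_approve", "escalate_to_ap_manager", "auto_reject"):
    print(decision, within_envelope(example, decision))
```

Unlike a single-outcome assertion, this check keeps passing when the agent moves between approval and escalation, and still fails on an auto-rejection.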

SyntraFlow's test framework for Oracle AI agents supports outcome envelope definition natively — business users define the policy boundaries, and SyntraFlow validates agent decisions against those boundaries.

Scenario-based testing at scale. AI agent reliability is a statistical property. An agent that makes correct decisions 95% of the time is not adequate for compliance-critical processes, where a 5% error rate represents significant financial or regulatory risk. Establishing that an agent is reliable enough therefore requires running large scenario sets, not single test cases. Legacy tools built for small, precise test libraries are not architecturally optimised for that scenario volume.
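One way to see why volume matters is to put a confidence bound on the observed compliance rate. The sketch below uses the Wilson score interval, a standard statistical technique chosen here for illustration; the 99% target and the function names are assumptions, not figures or interfaces from any vendor.

```python
import math


def wilson_lower_bound(passes: int, total: int, z: float = 1.96) -> float:
    """Lower end of the Wilson score interval (z=1.96 is roughly 95% confidence)."""
    if total == 0:
        return 0.0
    p = passes / total
    denom = 1 + z * z / total
    centre = p + z * z / (2 * total)
    margin = z * math.sqrt(p * (1 - p) / total + z * z / (4 * total * total))
    return (centre - margin) / denom


def meets_target(passes: int, total: int, target: float = 0.99) -> bool:
    """True only if the lower bound clears the target, not just the raw rate."""
    return wilson_lower_bound(passes, total) >= target


# 100 scenarios, all inside the envelope: the raw rate is 100%,
# but the lower bound is only about 0.96, so a 99% target is not demonstrated.
print(meets_target(100, 100))
# 10,000 scenarios, all inside the envelope: the bound now clears 99%.
print(meets_target(10_000, 10_000))
```

Under these assumptions, a perfect run of 100 scenarios cannot support a 99% reliability claim, while a perfect run of 10,000 can.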

Post-production monitoring. Because AI agents learn from production data, their behaviour evolves after go-live. A test suite that validated the Payables Agent in April may not correctly characterise its behaviour in August, because the agent has processed months of production invoices in the interim. Effective AI agent testing requires a continuous monitoring component — tracking agent decision patterns in production and flagging when behaviour drifts outside the defined acceptable envelope.

Legacy test automation platforms have no post-production monitoring capability. They were designed to run in test environments, not to observe and analyse production system behaviour.
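A drift check of the kind described above can be approximated by comparing the mix of agent decisions between two periods. This is a simplified sketch: the decision labels, the sample counts, and the 10-percentage-point threshold are all hypothetical, and a real monitor would also need to check each decision against its outcome envelope.

```python
from collections import Counter


def decision_rates(decisions: list[str]) -> dict[str, float]:
    """Share of each decision type in a list of observed decisions."""
    total = len(decisions)
    if total == 0:
        return {}
    return {outcome: n / total for outcome, n in Counter(decisions).items()}


def drift_alerts(baseline: list[str], current: list[str],
                 max_shift: float = 0.10) -> list[str]:
    """Flag outcomes whose share moved by more than max_shift (assumed threshold)."""
    base, cur = decision_rates(baseline), decision_rates(current)
    alerts = []
    for outcome in sorted(set(base) | set(cur)):
        before, after = base.get(outcome, 0.0), cur.get(outcome, 0.0)
        if abs(after - before) > max_shift:
            alerts.append(f"{outcome}: {before:.0%} -> {after:.0%}")
    return alerts


# Hypothetical decision logs for the two months mentioned above.
april = ["auto_approve"] * 80 + ["escalate"] * 20
august = ["auto_approve"] * 60 + ["escalate"] * 38 + ["auto_reject"] * 2

for alert in drift_alerts(april, august):
    print(alert)
```

With these made-up logs, the shifts in auto-approval and escalation are flagged, while the small appearance of auto-rejections stays under the threshold. That last point shows why a rate comparison alone is not a substitute for envelope checks.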

SyntraFlow's Oracle AI Agent Testing Approach

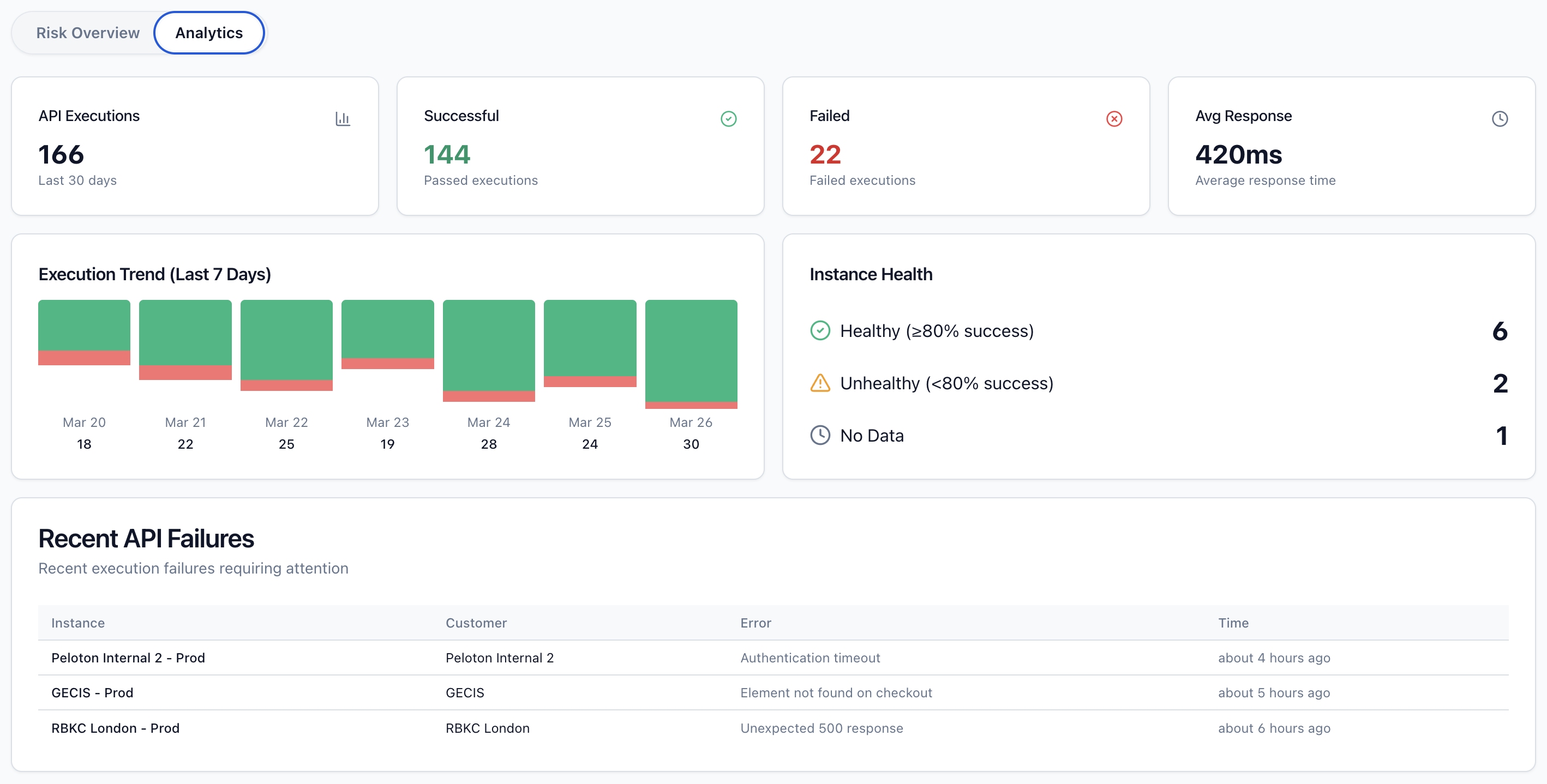

SyntraFlow's Oracle AI agent testing framework provides all three capabilities. The Scenario Library approach — pre-built sets of business scenarios with defined outcome envelopes for each Oracle AI agent — enables rapid scenario-based validation without building from scratch. SyntraFlow's production monitoring dashboard tracks Oracle AI agent decision patterns in production and surfaces drift alerts when behaviour moves outside defined boundaries.

For Oracle teams deploying AI agents from the 26A and 26B releases in Finance, HCM, and SCM, SyntraFlow provides the only Oracle testing approach specifically designed for the probabilistic, adaptive nature of AI agent behaviour.

Contact SyntraFlow to discuss your Oracle AI agent testing requirements — and to see how our Scenario Library approach compares to what your current testing platform offers for Oracle's new agentic capabilities.