There is a simple arithmetic problem at the heart of manual Oracle Fusion testing. A QA engineer executing Oracle test cases manually can complete 15-20 test cases per day: navigating to the right Oracle screen, entering test data, verifying the Oracle output, and recording the result. A medium Oracle Fusion deployment has 500-1,000 meaningful regression test cases. Running a full regression cycle manually therefore requires roughly 25-65 engineer-days (500 ÷ 20 at the fast end, 1,000 ÷ 15 at the slow end), which is between one and three months of one full-time QA engineer's capacity.
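
The arithmetic above can be sketched in a few lines of Python. This is only an illustration: the function name is hypothetical, and rounding up to whole engineer-days is an assumption, which is why the upper bound lands slightly above the rounded figure quoted in the text.

```python
import math

def manual_cycle_days(test_cases: int, cases_per_day: int) -> int:
    """Engineer-days needed to run `test_cases` manually, rounded up (assumption)."""
    return math.ceil(test_cases / cases_per_day)

# Best case: smallest suite at the fastest pace.
best = manual_cycle_days(500, 20)
# Worst case: largest suite at the slowest pace.
worst = manual_cycle_days(1000, 15)

print(f"Full manual regression: {best} to {worst} engineer-days")
```

Swapping in your own suite size and daily throughput gives a first estimate of what one full manual cycle costs.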

With four Oracle quarterly updates per year, this arithmetic is unsustainable. Manual regression testing for Oracle Fusion leaves most organisations with two bad choices: accept thin test coverage (run 100 tests instead of 1,000 and hope the untested 900 are fine) or accept extreme workload peaks (run all 1,000 tests by burning out your QA team every quarter).

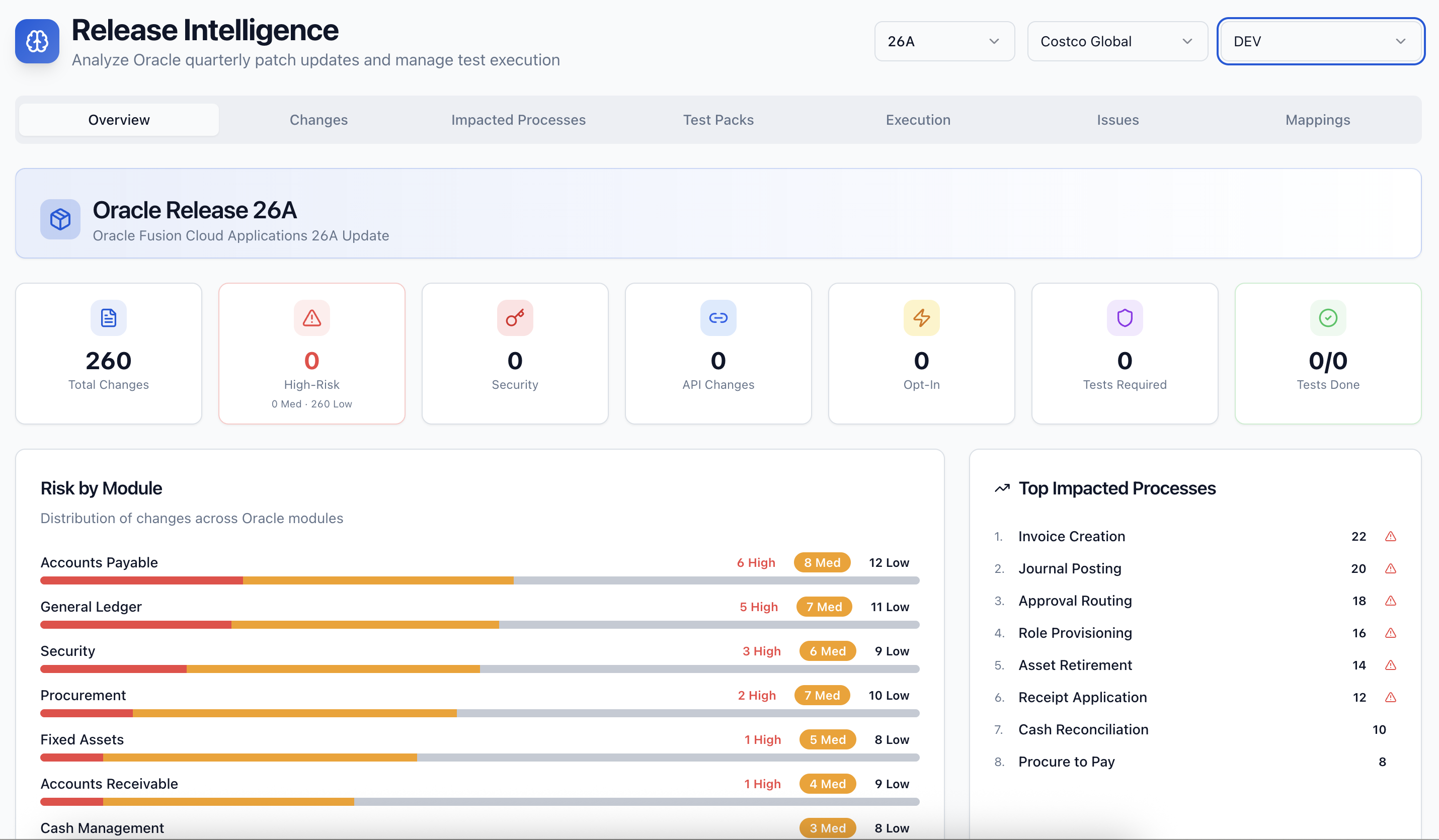

AI-driven test automation changes this arithmetic. SyntraFlow's automation engine executes 500-1,000 Oracle test cases in 2-4 hours, the same tests that take 25-65 engineer-days to run manually. The productivity multiplier is not 10% or 50% better. It is 10x to 50x better.

What the Productivity Multiplier Actually Enables

A 10x to 50x productivity improvement is not just about doing the same work faster. It changes what Oracle QA teams can achieve.

More frequent regression cycles. With manual testing, most Oracle teams run a full regression cycle once per quarterly update, because that is all the capacity allows. With AI automation, teams can run full regression cycles weekly against the Oracle non-production environment, catching configuration drift and integration issues up to 12 weeks before the next quarterly update rather than discovering them in the release window.

Larger test coverage. With manual testing, Oracle QA teams make coverage trade-offs — choosing which 100 of the 1,000 meaningful test cases to run given time constraints. With AI automation, all 1,000 run every cycle. Coverage gaps that manual testing accepts as necessary trade-offs disappear.

Better compliance validation. Compliance test scenarios — ZATCA e-invoice clearance, WPS SIF file validation, SOX journal entry controls — are often deprioritised in manual testing cycles because they are time-consuming to execute correctly. With automation, compliance tests run every cycle with the same rigour as functional tests.

Faster Oracle quarterly update cycles. Oracle teams using manual testing typically need 3-4 weeks to complete a quarterly update regression cycle. Teams using SyntraFlow's automation complete the same cycle in 3-5 days — freeing 2-3 weeks per quarter for the QA team to focus on new capability testing and process improvement.

The Productivity Math for a Real Oracle Team

Consider an Oracle QA team of 3 engineers supporting a 6-module Oracle Fusion deployment. Their current quarterly cycle:

Manual testing approach: 600 test cases × 1 hour each = 600 hours per cycle. At 3 engineers × 8 hours per day, that is 25 working days — 5 weeks — per quarterly cycle. With 4 cycles per year, 20 weeks of the year (38% of available capacity) is consumed by quarterly regression execution alone, before any new testing, defect investigation, or continuous improvement.

SyntraFlow automation approach: The same 600 test cases execute in 6 hours of automated run time. QA engineer time for the cycle: 2 days for test library review and update (using Release Intelligence impact analysis), 1 day for results review and defect triage. Total: 3 engineer-days per cycle. With 4 cycles per year, that is 12 engineer-days, about 1.5% of the team's roughly 780 available engineer-days (3 engineers × 260 working days), for quarterly regression. The remaining 98% or so is available for coverage expansion, compliance testing, and higher-value QA work.
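
The comparison above can be reproduced with a short Python sketch. The team size, test count, and per-cycle effort are taken from the example. The 260-working-day year and all constant and function names are assumptions introduced for illustration. On that basis the manual approach consumes about 38% of the team's annual capacity, and 3 engineer-days per cycle comes to under 2%.

```python
HOURS_PER_DAY = 8
WORKING_DAYS_PER_YEAR = 260  # assumption: 52 weeks x 5 working days
ENGINEERS = 3
CYCLES_PER_YEAR = 4

def capacity_share(engineer_days_per_cycle: float) -> float:
    """Fraction of the team's annual engineer-days spent on quarterly regression."""
    annual_capacity = ENGINEERS * WORKING_DAYS_PER_YEAR
    return engineer_days_per_cycle * CYCLES_PER_YEAR / annual_capacity

# Manual: 600 test cases x 1 hour each, converted to engineer-days.
manual_days = 600 * 1 / HOURS_PER_DAY
# Automated: 2 days of test library review plus 1 day of results triage.
automated_days = 2 + 1

print(f"Manual:    {capacity_share(manual_days):.1%} of annual capacity")
print(f"Automated: {capacity_share(automated_days):.1%} of annual capacity")
```

Changing `ENGINEERS`, the test count, or the per-cycle review effort adapts the model to a different team.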

Comparing Against Competitor Platforms

Tricentis Tosca and UFT offer test automation that can provide similar execution speed benefits — but the comparison is not just about execution speed. It is about the total productivity equation including test maintenance.

For every Oracle quarterly update, Tosca and UFT teams can spend 2-3 weeks updating test scripts broken by Oracle's UI changes. SyntraFlow's self-healing automation is designed to remove this script-repair overhead, so the real-world productivity comparison (execution speed minus maintenance overhead) is more favourable to SyntraFlow than raw execution speed alone suggests.

Contact SyntraFlow for a productivity analysis tailored to your Oracle team size, Oracle module footprint, and current testing approach. We will model the before-and-after productivity equation and give you the numbers for your business case.